You Are Not a Loan

Right before Covid-19 disrupted our lives, I assembled a group of activists and academics to discuss the crisis of higher education and what was next for the growing movement to cancel student debt and make college and university tuition free. The 45-minute film “You Are Not a Loan” is a record of this encounter, which took place on February 7, 2020. After nearly a decade of grassroots organizing, the Debt Collective, a union for debtors that I helped found, succeeded in making student debt a central issue in the Democratic presidential primary. Sens. Bernie Sanders and Elizabeth Warren campaigned aggressively on canceling various amounts of student debt while expressing varying commitments to higher education as a right. Our movement had entered a pivotal moment that seemed worth documenting. We needed to figure out how to advance and expand our agenda. Of course, we had no idea what was just around the corner. In many ways, “You Are Not a Loan” is more resonant now than when we shot it. The pandemic dealt this country’s fragile higher education system a potentially existential blow, making the issues and solutions raised in the film more urgent and mainstream. Meanwhile, the call to cancel debts (student loans and also medical debt, past-due mortgages, and back rent) can now be heard emanating from struggling communities and echoed by progressive representatives in Congress. Even President Joe Biden has embraced the necessity of student debt cancellation. Though his proposal is inadequate (he has promised $10,000 of “immediate” relief along with more substantial cancellation for students who attended certain schools and meet certain income thresholds), it is a notable development for a former senator of Delaware, the credit card capital of the world, and a man who played a key role in pushing legislation that rolled back bankruptcy protections for student borrowers.
The idea for this project emerged out of conversations with Paul Holdengräber and his team at Onassis Los Angeles, a newly opened center for dialogue around social change and justice. With their support, I was able to convene a group that included Debt Collective organizers, student debtors, and esteemed scholars, including political theorist Wendy Brown, historian Barbara Ransby, economist Stephanie Kelton, and others. The dialogue that ensued is personal and philosophical, historically grounded and engagingly hypothetical. The film offers an intimate view of the ongoing and growing grassroots struggle to transform our broken, profit-driven education system and also reveals some of the challenges facing the effort. There are insightful and humorous moments as participants attempt to speak and strategize across cultural and class divides. As a documentary filmmaker, I’ve long been a fan of political cinema from the 1960s, especially fly-on-the-wall accounts of impassioned meetings and intimate conversations where people share grievances and plan next steps. I share this sensibility with my main collaborator on this project, the multitalented Erick Stoll, who shot and edited the project. We wanted to give viewers a sense of being immersed in an activist milieu while showing the ways that these milieus naturally create space to ask big questions, blurring the supposed divide between theory and practice. As the brilliant historian Robin D. G. Kelley once wrote: “Social movements generate new knowledge, new theories, new questions. The most radical ideas often grow out of a concrete intellectual engagement with the problems of aggrieved populations confronting systems of oppression.” That has certainly been my experience collaborating with the Debt Collective.
With the Debt Collective’s core demands of student debt cancellation and free college being discussed on the national stage, my aim was to prompt the group to step back and reflect on the big picture to help us figure out how to keep moving forward. How did we get to this point? What would truly free college — meaning free as in cost and free as in aimed at liberation — be like? How have racism and capitalism sabotaged public education as we know it? What do we mean by the word “public”? Where is our power to change things? Little did we know how much things were about to change for the worse. Within a matter of weeks, college campuses across the country would shut down, and tens of millions of jobs would disappear, causing students to question the value of Zoom learning and pushing countless people deeper into debt. An already dire situation suddenly became much worse. In the wake of the pandemic, additional budget shortfalls are already leading to hiring freezes, faculty layoffs, tuition hikes, and mounting student debt. “You Are Not a Loan” puts current events and the deepening crisis of higher education into a broader context. It explores past decisions that set us on our current path while pointing toward a utopian horizon we can still reach for — a horizon where education is decommodified and democratized, available to all who want to learn. Most importantly, it offers a reminder that we will only shift course if regular people organize and fight back. The Debt Collective proposes one approach that we hope will assist such an endeavor. We believe that engaging debtors in campaigns of strategic economic disobedience (a concept I discussed at length with Jeremy Scahill on the Intercepted podcast) can yield novel tactics to tackle inequality and strengthen other established social movement strategies. 
Just like workers need labor unions to secure higher wages and benefits, borrowers need debtors’ unions that can engage in collective campaigns to secure debt write-downs and cancellation and the provision of social services, such as free college and universal health care, to ensure that no one is forced to take on debt to survive. The dominant idea that debts have to be repaid is a bedrock principle of modern financial capitalism — as long as those debts are held by regular people and not bankers, big corporations, or Donald Trump, of course. By insisting otherwise, we pose a profound challenge to the economic status quo. Putting our principles in action, the Debt Collective launched the first student debt strike in this country’s history in 2015, ultimately helping tens of thousands of borrowers defrauded by predatory for-profit colleges secure over $1 billion in student debt discharges and winning changes to federal law. Some of the original debt strikers appear in “You Are Not a Loan.” Their ranks have since grown. On January 20, the day of Biden’s inauguration, 100 student debtors declared themselves on strike. The Biden Jubilee 100, as they call themselves, demand that all $1.7 trillion of student debt be canceled within the Biden administration’s first 100 days. They come from all over the country and represent all walks of life. They are educators, doctors, graphic designers, gig workers, and even a pastor. What they have in common is that they can’t — and won’t — pay their student loans. Biden has the power to cancel all federal student debt with a signature. Congress long ago granted the executive branch the authority to do so. A movement is building to make him act. This film reveals how we got to the point and, hopefully, helps illuminate the possibilities that still lie ahead. - Astra Taylor @astradisastra https://debtcollective.org/

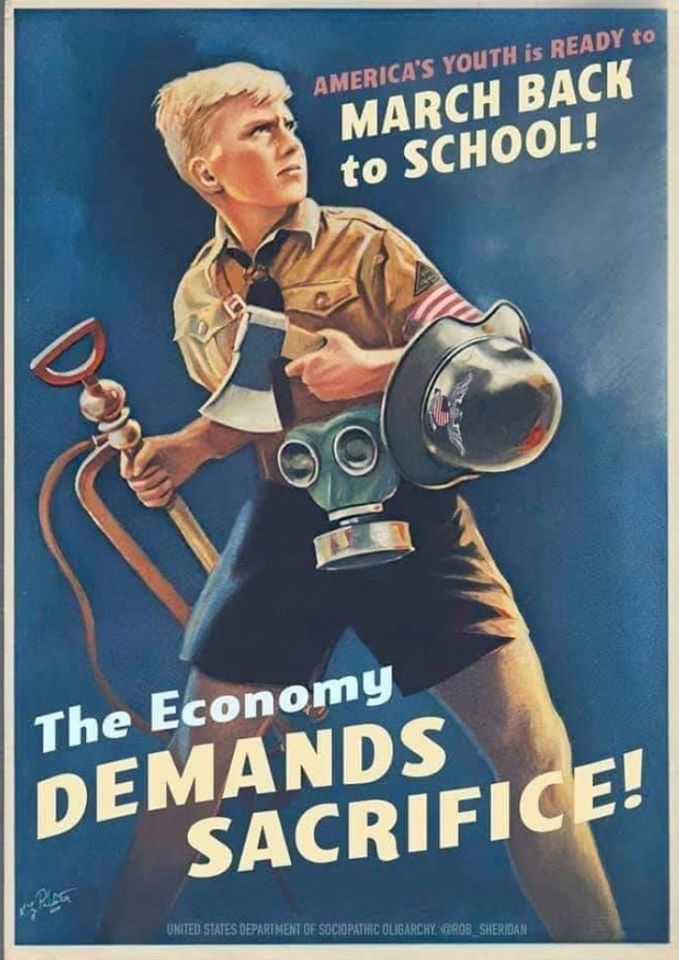

Most California schools are preparing for a new reality of entirely remote classes this fall, after Gov. Gavin Newsom last week announced that schools cannot offer in-person instruction if they are in counties the state is closely monitoring for coronavirus spread.

That means it is back to the drawing board for the many districts that were previously planning on offering a variety of options to students and parents, ranging from in-person classes and online instruction to hybrid approaches that involve a blend of both. Distance learning “is a challenge in any experience,” Newsom said in his daily briefing on Wednesday. In-person instruction would be far preferable, he said. “Our number one desire is to get our schools back open, in person, with high quality social emotional learning, not just academic learning, that is essential to the development of our children.” But keeping schools closed for in-person instruction in counties on the state’s Covid-19 monitoring list is necessary to reduce infection rates in California, he said. District officials interviewed by EdSource said they felt relieved to now have a clearer set of expectations from the state about when and how to bring students back to campus. At the same time, they said the future is still uncertain, and they are trying to be prepared to make further pivots should they be necessary. And despite the greater certainty Newsom has introduced into the school fall landscape, cases of Covid-19 are now increasing in California, and teachers and administrators are also preparing for more changes that could come at any point, depending on the course of the pandemic in the coming weeks and months. Distance learning was unsuccessful for many students during the rapid pivot last spring, and now state education officials are looking into ways to help districts make remote learning more effective this fall. On Thursday, California State Superintendent of Public Instruction Tony Thurmond announced in a webinar that districts can now apply for $5.3 billion in available funds to assist with improving distance learning and mitigating learning loss.
For now, planning blueprints for hybrid and in-person instruction models that districts have been working on intensively for weeks or months are on hold as school officials in the more than 30 counties on the state’s monitoring list refocus their sights on distance learning. That is the case in Sanger Unified in Fresno County, one of the counties being monitored by the state. “In-person, face-to-face instruction we believe is the best for our students, and we thought we could pull it off, especially in elementary with social distancing,” said Tim Lopez, associate superintendent of educational services in Sanger Unified. “But we know it’s important to limit the community spread, so we want to be a part of that.” Last month, in response to the clear need to offer improved distance learning after its rushed rollout in the spring, California legislators in June passed the state’s 2020-21 budget bill with detailed requirements regarding remote learning. School districts have to implement the provisions of the bill in order to receive funding from the state. As a result of Gov. Newsom’s order last week, the requirements are getting closer scrutiny. Among others, school officials have to confirm that students have access to the necessary technology to participate in online classes, take attendance, monitor weekly progress and ensure teachers interact daily with students. Until last week, parents in Sanger Unified had the opportunity to choose between four different options for returning to school: completely in-person instruction, a fully online curriculum, a hybrid schedule, or a homeschooling option where students work with their parents and also receive support from teachers via a district-run charter school. But now, all students will start the year off with distance learning. That will include daily live online lessons with teachers, Lopez said, as well as offering regular office hours when students can reach teachers for additional support. 
“Especially at the lower grades, teachers found we need to have timely and constant feedback from students,” Lopez said. He said all the work the district has done in drawing up other instructional models will not be wasted. Having a list of options already on hand could help the district pivot to in-person or online with short notice, Lopez said. The district’s original fully online option will now be extended to all students. When health conditions improve, the plan is to give parents the option to have students return to school part-time or remain with full distance learning. Some researchers worry that districts that were focused on offering hybrid instruction, with some classes offered in person and others remotely, may not have put in the necessary time to set up a high quality distance learning program. “We are pretty concerned that this move to virtual doesn’t have an equal amount of planning behind it,” Robin Lake, director of the Center on Reinventing Public Education at the University of Washington, said during an Education Writers Association panel discussion on Thursday. In fact, some districts, like Corona-Norco Unified in Riverside County, were hoping all their students would return for in-person instruction in August. Earlier this summer, the district crafted a detailed plan for how students and staff could practice social distancing on campus and implement guidelines for masks and sanitation requirements when schools reopened. But as the number of coronavirus cases climbed in Riverside County, which is on the state’s monitoring list, Superintendent Michael Lin said it became clear that a full return would not be possible. The district is now planning to start the school year off on Aug. 11 with all students learning remotely. “We figured back in June that we would have time by August to implement safety measures to bring students back,” said Lin.
“The key is to return as safely as possible, it’s not about returning as quickly as possible.” Despite the changes his district has had to make, Lin said he was relieved by the governor’s order on Friday because it sets clear standards and expectations across the state. “For me, that’s the right decision. We all want to return, but we can’t do it if it’s not safe,” Lin said. “If teachers don’t feel safe, what kind of teaching will we have?” Distance learning proved to be ineffective for many students last spring when schools closed suddenly for in-person instruction, and now some districts are seeking to come up with creative solutions to ensure greater success for students in an only-online environment. San Francisco Unified, for example, is planning to open up 40 in-person “learning hubs,” or spaces where students can go in-person to access digital coursework and receive help. The program will prioritize low-income families, foster children and other students in a difficult situation for remote learning, the San Francisco Chronicle reported on Thursday. Many districts are now re-creating plans while recognizing that health conditions and state and local requirements and guidelines could change any day. San Ramon Valley Unified near Walnut Creek in the East Bay voted to approve a hybrid learning model on June 14. But less than 36 hours later, the district received notice about the upcoming announcement from Newsom about school closures and began to re-evaluate its plans. The school district is located in Contra Costa County, which is on the state’s monitoring list. At the same time, the district heard from its local public health authority that contact tracing of the spread of the disease in the district would be a challenge, according to Chris George, the district’s director of communications. 
By June 16, just two days after deciding upon a hybrid model, and even before Newsom formally made his announcement, the board reversed its earlier decision and voted to begin the school year with distance learning. “We all share the goal of getting kids back into the classroom as soon as it’s safe,” George said. “As we’ve seen since the beginning of March, this means we must all prepare for change.” Newsom echoed similar feelings of frustration, along with acceptance of the new remote learning reality that it appears most districts across the state will have to embrace this fall. “Clearly we have a lot of work to do for more rigorous distance learning,” he said. “Students, staff and parents all prefer in-classroom instruction, but only if it can be done safely.”

The University of Pennsylvania and the United Nations Children’s Fund (UNICEF) are jointly launching a free massive open online course on social norms and social change. The complete course, which consists of one theoretical and one practical part, each with four weeks of coursework, is taught entirely in English. Students who attended previous sessions of the course rated the first part 4.5 and the second part 5.0 out of a possible 5.0 points.

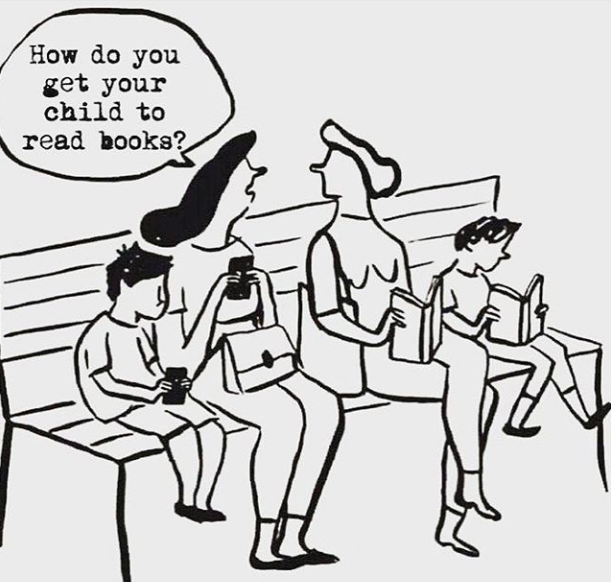

The first course will teach students how to make the distinction between social norms and social constructs, like customs or conventions. These distinctions are crucial for effective policy interventions aimed at creating new, beneficial norms or eliminating harmful ones. The course teaches how to measure social norms and the expectations that support them, and how to decide whether they cause specific behaviors. The course discusses several issues that are closely related to human rights such as child marriage, gender violence and sanitation practices. The second part of the course will examine social change and the tools that might be used to enact change, and will put into practice everything that has been learnt in the first part of the course. While both course parts are available for free, those who want to receive a Penn-UNICEF certification can opt in for a paid verified certificate.

This article will discuss the role of education as it is currently viewed and why this current understanding is both undesirable and harmful. I will then propose how education ought to be understood, arguing that an approach that focusses on the development of agency is preferable to an approach that focusses on employability. I hope to demonstrate the first, and least controversial, aim by making recourse to government documents, speeches given by various education secretaries, and intuition mongering from the various pupils I have had the fortune (often good) to teach. I will then challenge, on two fronts, the view that education ought to be concerned with ensuring those who undertake this process are able to service labour-needs. Firstly, I will argue that education cannot satisfy this condition in virtue of the rapidly changing skills required by an increasingly dynamic, hostile, and unpredictable labour-market. Secondly, I will argue that even if education could satisfy this condition, it shouldn’t attempt to do so.
The concern is that in virtue of education’s perception-forming effect, an agent’s weighing of worth (or value, broadly construed) along instrumental lines (i.e. a thing is of value if and only if it is of service to businesses, employers, markets, or capitalism generally) will lead to impoverished agents qua valuers. These concerns can be distilled into two distinct categories of harm:

1) Young people cannot be prepared for work, when the nature of this work is in a state of radical change. Indeed, it’s not obvious what work will look like (given automation and the possibility of a reduced working week) in the near future.

2) Young people will perceive themselves as instruments, and measure their worth instrumentally. This self-perception, and perception of how to value people, frustrates their understanding of what their conception of a ‘good life’ looks like.

After the critical, that is, negative, aspect of the article is expressed, I will suggest a few ways we can ameliorate the concerns raised.

What has education been for?

It will be uncontroversial to state that the current language around education policy has been oriented towards employment. Schools and universities are considered successful to the degree they enable those who pass through their doors to go on and find a job. This success condition is seemingly a constitutive feature of education policy and finds its way, unchallenged, into speeches given by every education secretary in living memory, initially as politically attuned rhetoric:

“We should judge our success not just on how young people do in school, university or college, but how well their education prepares them for work, and how adults in the workforce have opportunities to progress.” (Department for Education and Justine Greening MP, 2017, italics added)

then reaffirmed as policy:

“The Higher Education Statistics Agency (HESA) publishes performance indicators (PIs) for higher education in 3 batches each year, on behalf of the 4 UK funding bodies.
[…]” These refer to:

– retention of higher education students (students who do not progress to the second year of their courses)
– employment outcomes of leavers

(Department for Education and HESA, 2018, italics added)

Almost every policy published in recent years has included some proviso about the need for young people to cultivate skills that are of value to employers. Most recently, this has taken on the language of ‘character’, presumably to begin the move of tying notions of a ‘moral’ education to discussions around social economics (an agent is good to the degree they are of benefit to the wider economy):

“The DfE understands character education to include any activities that aim to develop desirable character traits or attributes in children and young people. The DfE believe that such desirable character traits:
– Can support improved academic attainment;
– Are valued by employers; and
– Can enable children to make a positive contribution to British society”
(NatCen Social Research & The National Children’s Bureau Research and Policy Team, 2017, italics added)

These intuitions around what an education is do not find themselves confined to those who are in control of policy. A cursory conversation with some secondary school pupils (Year 7 – Year 9) reveals that they, too, share these base suppositions about the role of education. When asked the question ‘what is school for?’ – 203 out of 206 pupils agreed that they came to school so they would be able to get a job. A fortiori, informal conversations with various members of my school’s Senior Leadership Team (SLT) frequently express an intuited presupposition that their job is to ensure that the school is taking demonstrable measures to satisfy the government’s success condition. That is, the school is trying to promote the pupils’ employability.
The school has been told to perceive itself as successful to the degree our pupils are employable upon leaving, and now this perception has become the very presupposition of the school management. These views find similar expression in parents who, understandably, want to ensure their children are adequately prepared to find better employment than they themselves had access to. Two questions immediately emerge from this:

1. If schools are solely directed towards the benefit of business to such a degree that their success is measured in such terms, why is the state responsible for funding education, especially when this education has now taken the form of ‘vocational training’? Which is to say, why are businesses not funding schools directly?

2. If we are now looking forward to the reality of a disrupted and deteriorating labour market, what are our schools preparing pupils for?

The first question will not be given any sort of separate or sustained treatment. Nor will I explicitly discuss the possibility of post-work scenarios (related to, but distinct from, point 2.) – Keynes’ vision of a 15hr working week, and the reasons this has not come about, have been given far better treatments than I could manage (see Keynes, Foucault, and the Disciplinary Complex (Guizzo & Stronge, 2018) as an exemplar of such a treatment).

The Future of Work

This section will examine the following argument:

1) We harm our young people by denying them an education.
2) Education is concerned only with cultivating a skillset valued by employers.
3) We cannot offer pupils an education that employers of the (near) future will value.
4) We cannot but harm our young people.

Premise 1. is our starting assumption and – taking this as fairly uncontroversial – I will not offer an argument for it here. Premise 2. is an evaluative statement of our current understanding (as expressed by pupils and government policy). Premise 3. is a descriptive statement which I hope to qualify. Our conclusion (4.)
is reached, given the truth of 1-3. If I can show premise 3. is correct, and that this is in tension with 2., I hope to show that premise 2. is the underlying assumption that is causing the harm mentioned in 4. My argument for the above statement will centre around the claim that the rapidly changing nature of work makes it almost impossible to predict, and provide policy for, what sort of skills are needed to service it. Two skills-based concerns are raised. Firstly, job polarization (a hollowing out of middle-quality jobs). Secondly, overskilling (a dramatic increase in the graduate workforce, which has led to ‘credentialism’ in the labour market). Job polarization is when job quality (partially defined by the median wage) tends towards two poles (one pole being ‘high quality’, the other being ‘low quality’). Where the distribution of job quality used to benefit from a spread range (top, middle, bottom), economists have observed an increasing, and (importantly) rapidly increasing, ‘hollowing out’ of middle-quality jobs, leaving only high and low quality jobs available for the workforce. Goos and Manning (Goos & Manning, 2007) examine increasing levels of job polarization in the U.K. since 1975, and provide evidence for the claim (following Autor, Levy, Murnane (Autor et al., 2003)) that the ‘routinization’ of labour – specifically, labour that was once done by skilled workers capable of high-precision work (bookkeepers, for example) – has had an obliterating effect on middle-quality jobs (most obviously caused by the adoption of technology, which is extremely suited to routine, algorithmic, rule-based work). Workers are, instead, offered only the choice of ‘lousy’ (low-quality) or ‘lovely’ (high-quality) jobs. Upon leaving tertiary education, graduates now scramble for the ‘lovely’ jobs.
This is, of course, zero-sum (a person gains at the expense of another) and we are now noticing increasing underemployment – most frequently in virtue of the, now notorious, zero-hour contracts, and the rise of gig-economy based jobs – amongst graduates. An obvious problem with graduate saturation in the job market is the resultant ‘credentialism’ that emerges. Employers are now asking for graduate-level credentials (hence, ‘credentialism’) to fill roles that – ultimately – do not require this level of education to perform competently. This is having an enormous detrimental effect: making low-skilled, low-paid, casual, temporary, or gig-based work the preserve of those with a degree (or higher), means those who would normally be reliant on these job opportunities (i.e. non-graduates) are being passed-over without consideration. Non-graduates have as much to offer these employers (given that graduates don’t have a relevant skillset), but are deemed to be less attractive prospects given assumptions related to cultural-capital accumulation. Interestingly, this increase in educational attainment required by employers has not led to an increase in wages for those doing these jobs. Job polarization has created a compound negative effect. Firstly, graduates are taking jobs not relevant to the subjects they studied at university, often for less money than they were expecting. The skills they have spent years (and, indeed, money) developing are now – if we are to assume the terms of the education policy-narrative – completely mismatched and redundant. Research has found that over 50% of graduates go into jobs that do not require a degree. 
One reporter in The Guardian makes the claim that pursuing a tertiary education has, therefore, been an utter waste of time for these graduates: “Britain’s failure to create sufficient high-skilled jobs for its rising proportion of graduates means the money invested in education is being squandered, while young people are left crippled by student debts[…]” (Allen, 2015) The language around this problem is telling. ‘Money invested in education’, ‘failure to create high-skilled jobs’, and ‘squandered’ – all presuppose that the purpose of education is to gain employment. If you are only obtaining a lousy job, then you have wasted your money – and your time – by going to university. You have bought a defective product, a product that did not perform the function (i.e. access to a ‘lovely’ job) you bought it to do. CIPD Chief Executive, Peter Cheese, is quoted in The Guardian saying: “Graduates are increasingly finding themselves in roles which don’t meet their career expectations, while they also find themselves saddled with high levels of debt. This ‘graduatization’ of the labour market also has negative consequences for non-graduates, who find themselves being overlooked for jobs just because they have not got a degree, even if a degree is not needed to do the job.” “Finally, this situation is also bad for employers and the economy as this type of qualification and skills mismatch is associated with lower levels of employee engagement and loyalty, and will undermine attempts to boost productivity.” (The Guardian, 2016) So, why are graduates taking these jobs in the first place? The answer is related to the problem education policy-makers have… the sort of jobs available are changing in nature, and changing too quickly to make prudential judgments on. There has been a long and steady decline of middle-quality job availability. What we have seen, instead, is a hollowing-out and polarizing process.
Very few highly paid jobs, requiring an increasingly specialized skillset, are being created; however, there is an abundance of increasingly mundane, poorly paid (but non-routine and less automatable) jobs becoming available. The sort of middle-income jobs that used to be the preserve of graduates leaving Russell Group universities with solid 2:1 degrees are vanishing, forcing graduates to take jobs an education is simply not required for. This isn’t simply a case of over-qualification (although it is often that), it’s a case of irrelevant education. The job market is readjusting too quickly, and moving in a direction that (formal) education simply isn’t in a position to service. Providing our young people with skills required to be successful in the job market – and using this as our success condition – is simply something that education is not for. Peripheral to this concern is the real prospect of the full-automation of labour. The problem of job polarization is only likely to worsen with automation beginning to take root within the ‘lousy’ job sector. The low-skilled jobs graduates have taken from non-graduates are increasingly falling to rapidly developing innovations in technology, and even graduates cannot – even in principle – match the desirability of robots (which can work without break, without the possibility of unforeseen sick days, without pay, without irregularity in output, and without workplace disputes, inter-employee tensions (including bullying), or sexual-harassment lawsuits). A recent report produced by the World Economic Forum, citing research produced by the McKinsey Global Institute, claims “robots could replace 800 million jobs by 2030” (World Economic Forum, 2018).
Any job that involves repetitive operations is most clearly under threat, while the genuine prospect of ‘machine learning’, and machine problem-solving (via networked information sharing between robots) is making it unclear which jobs – including those that are not based on quasi-algorithmic behaviours – will resist automation. We cannot offer pupils an education that employers of the (near) future will value, because it is not obvious what jobs will resist full-automation the longest, and it is definitely not obvious if employers of the near future will value non-robot workers at all. If the role of education is to provide people with the means to gain employment, and if there are no such means available, then it appears to follow (given the above argument), that we have no real reason to educate our young people. Our assumed teleology of education, viz. ‘employment’, cannot be met under the current conditions of the New Industrial Revolution.

Philistine Formation

We have two good reasons to re-evaluate education’s function. The first is that our second premise cannot obtain in virtue of the considerations discussed in the previous section. The second reason to re-evaluate education’s function is to assess what kind of agent is produced by putting them through a formative, and value-formative, process that encourages them to think of subjects of enquiry along instrumental lines: ‘Physics is worth studying if, and only if, the study of physics (and the resultant qualification) is more likely to help me get a job than studying another subject in its place.’ And this is the model of thought expressed by many of the pupils I teach. Further, this is a model of thought actively encouraged since the adoption of the new ‘silo’ (aka ‘bucket’) system brought in to ‘weigh’ GCSEs in England and Wales. Pupils habituate, and intuit, the thought that x is valuable to learn only if it improves one’s job prospects.
Everything else is – literally – a waste of time and therefore ‘pointless’ (where this is considered a pejorative term). If all goods are qualified by ‘employability’, and no good is considered such in itself, this is nothing other than total and universal philistinism. Education, as it is currently structured, habituates and encourages value monopoly (where the value is ‘employability’) and, as such, generates a workplace populated by agents with an impoverished sense of value, and consequently an impoverished sense of self.

A Different Model Of Education

I want to begin by saying that the model of education I wish to propose is valuable in itself – that is, its value is not parasitic on the possibility (or inevitability) of full automation. As such, this model of thinking about education should be implemented regardless of one’s vision of the future. In this section I wish to propose a model of education that draws on certain Aristotelian/Hegelian approaches to claim that education, rather than being concerned with cultivating ‘workers’, should instead be oriented around cultivating ‘agents’ or ‘people’. This education-type, I will argue, should furnish pupils with a sense of what it is to be a person, how to decide between the various projects one can participate in in virtue of this person-ality, and give pupils the skills necessary to undertake a (perhaps rudimentary) engagement with formative projects. Being able to form a motivating conception of ‘the good’, and understanding the processes one has to undertake to participate in one’s preferred conception of ‘a good life’ (whatever such a life looks like for the agent who esteems it), ought to be facilitated by an education. Indeed, I want to argue that education should be organised around developing these skills. I will animate my argument by making recourse to the meta-ethical view ‘Constitutivism’. Hegelian constitutivism (my preferred variant) claims that to be a person is to be a normative type.
This view argues that each action has a constitutive feature that can be differentially realised. For Hegel, this feature is ‘freedom’. To be a ‘person’ is to be ‘free’ (a person isn’t a person if they are not free). Good agents are those who express and realise their (inherent) freedom, bad agents are those who fail to express, or realise, their freedom sufficiently well. Hegel’s argument here rests on views about the substantial structure of agency that I have argued for elsewhere, and I will take it as given that this view is granted (for the sake of argument). Intuitively, we can only express ourselves in the world if we have developed a skill-set of sorts. The more skilled we are, the more clearly we are able to express ourselves. I can convey meaning using sentences. The better command of the language I have, and the more effective a communicator I am, the more closely I can express my meaning (by drawing on a larger pool of words, by understanding my listener etc). Those with artistic skills are more able to express, in precise ways, clear internal content. They are better able to externalise (in the world), what was hitherto internal (in the mind). We can use these skills in a variety of ways. Firstly, we can use our skills to produce goods we need for our subsistence and (perhaps) luxury. Given that we need others to also have developed a skill-set in order to produce the things that we ourselves cannot produce, we might have a normative reason to not only seek out an education for ourselves, we will also have a (prudential) normative reason to ensure that there is access to education for all. Secondly, we can use our skills to express ourselves to our friends, colleagues and wider communities. To be an agent is to be free, and a large part of this freedom is going to be determined by how well an agent can express themselves in the world. 
Being able to express yourself, and having ‘yourself’ recognised by someone you respect (broadly speaking) are both vital presuppositions for our conception of a free agent. Education teaches us how to express, and, if done well, education also teaches us how to recognise the inalienable agency of an ‘other’. If I recognise a person (in the full sense) when I see one, and don’t mistake them for something simply person-shaped (as, for example, what happened in situations of slavery), I might treat them as something other than a mere object. Thus, education is a good in itself in that it is conducive to agency and, therefore, freedom. It plays a significant part in facilitating agential flourishing through cultivating patterns of reciprocal recognition. If I am told I play chess well by a person whose game is weak and under-developed, I might not take this person’s praise seriously. If I am told I play chess well by Garry Kasparov (or some such), I will do well to not blush with pride. Similarly, my dignity, and my recognition of my own dignity, is supported if it is recognised by an ‘other’ who I also recognise as having dignity. Indeed, my dignity is parasitic on this recognition and reciprocal recognition between equals is vital. If I recognise as persons those in the LGBTQ+ community, the BAME community, the disabled community (etc), via their expressions of agency (without these expressions they remain only shapes in the world), I am more likely to receive satisfaction when I am recognised in turn. Education has, Hegel claims, ‘infinite value’ for exactly this reason. It will be of infinite value to those who have it, and to others also – it helps us to see the humanity of all, not only those who look like us and believe the things we do: “A human being counts as such because he is a human being, not because he is a Jew, Catholic, Protestant, German, Italian, etc. 
This consciousness, which is the aim of thought, is of infinite importance […]” (PR §209R:240)

In short, education is a process that teaches us what it is to be a person, and how to engage in the project of becoming a person. Education does not make ‘subjects’ (in the sense of fashioning a finished thing), it makes ‘projects’. It cultivates – for those who participate in it – a robust conception of ‘the good’, and enables us to recognise the various ways we can express our self-interpretation. Further, it teaches us the form of personality so that we can recognise it when we see it. If we are able to recognise persons, and understand what is entailed by this recognition, we shall have a developed sense of how to behave towards, that is, how to treat, others, and how we, ourselves, can expect to be treated.

What This Means For Policy

So, what subjects should our young people learn in schools? Fortunately, the burden of answering this question does not fall to the author of this article. This article is meta-educational. Rather, the concrete conclusion arising from the prior argument centres around the claim that the way in which we weigh subjects must be re-valued alongside the intentionality behind subject choice preferences. I also want to restate the importance of de-tethering narratives around values and employment. This de-tethering will habituate more value pluralism in a way that defeats the worries around philistinism. Goods other than (but, perhaps including – if we are to accept a weaker view of this claim) ‘employability’ will be esteemed. Altering the language around education seems trivial, that is, until we realise how totally, and universally, we have adopted the unitary value inherent to servicing-capitalism. Two concrete proposals which could be put forth, and perhaps considered by policy makers, might – in the first instance – include:

1) Removing (not just reducing) all tuition fees.
Making education adopt a commodity form confuses ‘users’ of higher education about the nature of the thing they’re participating in. De-commodifying education will be a good starting point for challenging narratives that align ‘education’ with ‘product’. Further, education is a right, not a privilege, or something you should only participate in if you have the money to do so (or are prepared to take on an enormous debt – usually framed as a gamble on one’s future). It’s not obvious what you’re buying when you buy education (where education is taken to be conducive to one’s character development in itself). Indeed, even taking the current policy-narrative, making a person buy their own employability seems perverse.

2) Re-invigorating Further Education. Ensuring the material conditions are present for allowing agents to develop new competencies and, therefore, express their person-ality in new ways will be paramount. Quasi-informal courses, where one might pick up a language, learn to build a car, learn various crafts, etc, have no obvious teleology outside of ‘I found x interesting and wanted to know more about it’. People value this education because it helps them participate in a life they consider worthwhile.

References

Allen, K., 2015. UK Graduates are Wasting Degrees in Lower-Skilled Jobs. [Online] Available at: https://www.theguardian.com/business/2015/aug/19/uk-failed-create-enough-high-skilled-jobs-graduates-student-debt-report [Accessed 2nd April 2018].
Autor, D. H., Levy, F. & Murnane, R. J., 2003. The Skill Content of Recent Technological Change: An Empirical Exploration. Quarterly Journal of Economics, 118(4), pp. 1279 – 1333.
Department for Education and HESA, 2018. Performance Indicators: Widening participation 2016 to 2017. [Online] Available at: https://www.gov.uk/government/statistics/performance-indicators-widening-participation-2016-to-2017 [Accessed 26th March 2018].
Department for Education and Justine Greening MP, 2017. Justine Greening: Education at the core of social mobility. [Online] Available at: https://www.gov.uk/government/speeches/justine-greening-education-at-the-core-of-social-mobility [Accessed 28th March 2018].
Goos, M. & Manning, A., 2007. Lousy and Lovely Jobs: The Rising Polarization of Work in Britain. The Review of Economics and Statistics, 89(1), pp. 118 – 133.
Guizzo, D. & Stronge, W., 2018. Keynes, Foucault, and the ‘Disciplinary Complex’: A Contribution to the Analysis of Work. Autonomy, 1(1), pp. 1 – 18.
NatCen Social Research & The National Children’s Bureau Research and Policy Team, 2017. Developing character skills in schools. [Online] Available at: https://www.gov.uk/government/uploads/system/uploads/attachment_data/file/634710/Developing_Character_skills-synthesis_report.pdf [Accessed 20th March 2018].
The Guardian, 2016. Huge Increase in Number of Graduates ‘bad for UK economy’. [Online] Available at: https://www.theguardian.com/money/2016/oct/11/huge-increase-in-number-of-graduates-bad-for-uk-economy [Accessed 2nd April 2018].
World Economic Forum, 2018. The future of education, according to experts at Davos. [Online] Available at: https://www.weforum.org/agenda/2018/01/top-quotes-from-davos-on-the-future-of-education/ [Accessed 2nd April 2018].

By Amanda Ruggeri
2nd April 2019

At university, when I told people I was studying for a history degree, the response was almost always the same: “You want to be a teacher?” No, a journalist. “Oh. But you’re not majoring in communications?” In the days when a university education was the purview of a privileged few, perhaps there wasn’t the assumption that a degree had to be a springboard directly into a career. Those days are long gone. Today, a degree is all but a necessity for the job market, one that more than halves your chances of being unemployed. Still, that alone is no guarantee of a job – and yet we’re paying more and more for one. In the US, room, board and tuition at a private university costs an average of $48,510 a year; in the UK, tuition fees alone are £9,250 ($12,000) per year for home students; in Singapore, four years at a private university can cost up to SGD$69,336 (US$51,000). Learning for the sake of learning is a beautiful thing. But given those costs, it’s no wonder that most of us need our degrees to pay off in a more concrete way. Broadly, they already do: in the US, for example, a bachelor’s degree holder earns $461 more each week than someone who never attended a university. But most of us want to maximise that investment – and that can lead to a plug-and-play type of approach to higher education. Want to be a journalist? Study journalism, we’re told. A lawyer? Pursue pre-law. Not totally sure? Go into Stem (science, technology, engineering and maths) – that way, you can become an engineer or IT specialist. And no matter what you do, forget the liberal arts – non-vocational degrees that include natural and social sciences, mathematics and the humanities, such as history, philosophy and languages.
This has been echoed by statements and policies around the world. In the US, politicians from Senator Marco Rubio to former President Barack Obama have made the humanities a punch line. (Obama later apologised.) In China, the government has unveiled plans to turn 42 universities into “world class” institutions of science and technology. In the UK, government focus on Stem has led to a nearly 20% drop in students taking A-levels in English and a 15% decline in the arts. But there’s a problem with this approach. And it’s not just that we’re losing out on crucial ways to understand and improve both the world and ourselves – including enhancing personal wellbeing, sparking innovation and helping create tolerance, among other values. It’s also that our assumptions about the market value of certain degrees – and the “worthlessness” of others – might be off. At best, that could be making some students unnecessarily stressed. At worst? Pushing people onto paths that set them up for less fulfilling lives. It also perpetuates the stereotype of liberal arts graduates, in particular, as an elite caste – something that can discourage underprivileged students, and anyone else who needs an immediate return on their university investment, from pursuing potentially rewarding disciplines. (Though, of course, this is hardly the only diversity problem such disciplines have.)

Soft skills, critical thinking

George Anders is convinced we have the humanities in particular all wrong. When he was a technology reporter for Forbes from 2012 to 2016, he says Silicon Valley “was consumed with this idea that there was no education but Stem education”. But when he talked to hiring managers at the biggest tech companies, he found a different reality. “Uber was picking up psychology majors to deal with unhappy riders and drivers.
OpenTable was hiring English majors to bring data to restaurateurs to get them excited about what data could do for their restaurants,” he says. “I realised that the ability to communicate and get along with people, and understand what’s on other people’s minds, and do full-strength critical thinking – all of these things were valued and appreciated by everyone as important job skills, except the media.” This realisation led him to write his appropriately-titled book You Can Do Anything: The Surprising Power of a “Useless” Liberal Arts Education.

Take a look at the skills employers say they’re after. LinkedIn’s research on the most sought-after job skills by employers for 2019 found that the three most-wanted “soft skills” were creativity, persuasion and collaboration, while one of the five top “hard skills” was people management. A full 56% of UK employers surveyed said their staff lacked essential teamwork skills and 46% thought it was a problem that their employees struggled with handling feelings, whether theirs or others’. It’s not just UK employers: one 2017 study found that the fastest-growing jobs in the US in the last 30 years have almost all specifically required a high level of social skills. Or take it directly from two top executives at tech giant Microsoft who wrote recently: "As computers behave more like humans, the social sciences and humanities will become even more important. Languages, art, history, economics, ethics, philosophy, psychology and human development courses can teach critical, philosophical and ethics-based skills that will be instrumental in the development and management of AI solutions." Of course, it goes without saying that you can be an excellent communicator and critical thinker without a liberal arts degree.
And any good university education, not just one in English or psychology, should sharpen these abilities further. “Any degree will give you very important generic skills like being able to write, being able to present an argument, research, problem-solve, teamwork, becoming familiar with technology,” says Dublin-based educational consultant and career coach Anne Mangan. But few courses of study are quite as heavy on reading, writing, speaking and critical thinking as the liberal arts, in particular the humanities – whether that’s by debating other students in a seminar, writing a thesis paper or analysing poetry.

When asked to drill the most job market-ready skills of a humanities graduate down to three, Anders doesn’t hesitate. “Creativity, curiosity and empathy,” he says. “Empathy is usually the biggest one. That doesn’t just mean feeling sorry for people with problems. It means an ability to understand the needs and wants of a diverse group of people.

“Think of people who oversee clinical drug tests. You need to get doctors, nurses, regulators all on the same page. You have to have the ability to think about what’s going to get this 72-year-old woman to feel comfortable being tracked long term, what do we have to do so this researcher takes this study seriously. That’s an empathy job.” But in general, say Anders and others, the benefit of a humanities degree is the emphasis it puts on teaching students to think, critique and persuade – often in the grey areas where there isn’t much data available or you need to work out what to believe.

It’s small wonder, therefore, that humanities graduates go on to a variety of fields.
The biggest group of US humanities graduates, 15%, go on to management positions. That’s followed by 14% who are in office and administrative positions, 13% who are in sales and another 12% who are in education, mostly teaching. Another 10% are in business and finance. And while there’s often an assumption that the careers humanities graduates pursue just aren’t as good as the jobs snapped up by, say, engineers or medics, that isn’t the case. In Australia, for example, three of the 10 fastest-growing occupations are sales assistants, clerks, and advertising, public relations and sales managers – all of which might look familiar as fields that humanities graduates tend to pursue. Meanwhile, Glassdoor’s 2019 research found that eight of the top 10 best jobs in the UK were managerial positions – people-oriented roles that require communication skills and emotional intelligence. (It defined "best" by combining earning potential, overall job satisfaction rating and number of job openings.) And many of them were outside Stem-based industries. The third best job was marketing manager; fourth, product manager; fifth, sales manager. An engineering role doesn’t appear on the list until the 18th slot – below positions in communications, HR and project management. One recent study of 1,700 people from 30 countries, meanwhile, found that the majority of those in leadership positions had either a social sciences or humanities degree. That was especially true of leaders under 45 years of age; leaders over 45 were more likely to have studied Stem.

Be career-ready

This isn’t to say that a liberal arts degree is the easy road. “A lot of the people I talked to were five or 10 years into their career, and there was a sense that the first year was bumpy, and it took a while to find their footing,” Anders says.
“But as things played out, it did tend to work.” For some graduates, the initial challenge was not knowing what they wanted to do with their lives. For others, it was not having acquired as many technical skills with their degree as, say, their IT trainee peers and having to play catch-up after. But pursuing a more vocational degree can come with its own risks too. Not every teenager knows exactly what they want to do with their lives, and our career aspirations often change over time. One UK report found that more than one-third of Brits have changed careers in their lifetime. LinkedIn found that 40% of professionals are interested in making a “career pivot” – and younger people are interested most of all. Focusing on broadly applicable skills like critical thinking no longer seems like such a moon shot when you consider how many different jobs and industries they can be applied to (though for a young person figuring out their career path, it’s true that flexibility also can feel overwhelming).

Specialised technical skills are important in the job market too. But there are a number of ways to acquire them. “I’m very pro-internships and apprenticeships. We’ve seen that that can directly correlate to you having a more grounded skill base in the workplace,” says career development coach Christina Georgalla. “I even advocate that post-university, if you’re not sure, take a year out and instead of going travelling, actually trial doing different internships. Even if it’s the same field but in TV, say, broadcasting versus producing versus presenting, so you can see the difference.” But what about the other perceived pitfalls – like a higher unemployment rate and lower salaries?
Why broader matters

It’s true that the humanities come with a higher risk of unemployment. But it’s worth noting that the risk is slighter than you’d imagine. For young people (aged 25-34) in the US, the unemployment rate of those with a humanities degree is 4%. An engineering or business degree comes with an unemployment rate of a little more than 3%. That single additional percentage point is one extra person per 100, such a small amount it’s often within the margin of error of many surveys. Salaries aren’t so straightforward either. Yes, in the UK, the top earnings are pulled in by those who study medicine or dentistry, economics or maths; in the US, engineering, physical sciences or business. Some of the most popular humanities, such as history or English, are in the bottom half of the group. But there’s more to the story – including that for some jobs, it seems that it’s actually better to start with a broader degree, rather than a professional one.

Take law. In the US, an undergraduate student who took the seemingly most direct route to becoming a lawyer, judge or magistrate – majoring in a pre-law or legal studies degree – can expect to earn an average of $94,000 a year. But those who majored in philosophy or religious studies make an average of $110,000. Graduates who studied area, ethnic and civilisations studies earn $124,000, US history majors earn $143,000 and those who studied foreign languages earn $148,000, a stunning $54,000 a year above their pre-law counterparts. There are similar examples in other industries too.
Take managers in the marketing, advertising and PR industries: those who majored in advertising and PR earn about $64,000 a year – but those who studied liberal arts make $84,000. And even while overall salary disparities do remain, it may not be the degree itself. Humanities graduates in particular are more likely to be female. We all know about the gender pay gap, and notable wage disparities persist in the humanities: US men who major in the humanities have median earnings of $60,000, for example, while women make $48,000. Since more than six in 10 humanities majors are women, the gender pay gap, not the degree, may be to blame. We also know that as more women move into a field, the field’s overall earnings go down. Given that, is it any wonder that English majors, seven in 10 of whom are women, tend to make less than engineers, eight in 10 of whom are men?

Do what you love

This is a big part of why there is one major takeaway, says Mangan. Whatever a student pursues in university, it must be something that they aren’t just good at, but that they really enjoy. “In most areas that I can see, the employer just wants to know that you’ve been to college and you’ve done well. That’s why I think doing something that really interests you is essential – because that’s when you’re going to do well,” she says. No matter what, making a degree or career path decision based on average salaries isn’t a good move. “Financial success is not a good reason. It tends to be a very poor reason,” Mangan says. “Be successful at something and money will follow, as opposed to the other way around. Focus on doing the stuff that you love that you’ll be so enthusiastic about, people will want to give you a job.
Then go and develop within that job.” This speaks to a broader point: the whole question of whether a student should choose Stem versus the humanities, or a vocational course versus a liberal arts degree, might be misguided to begin with. It’s not as if most of us have an equal amount of passion and aptitude for, say, accounting and art history. Plenty of people know what they love most. They just don’t know if they should pursue it. And the headlines most of us see don’t help. This is part of why parents and teachers often need to take a step back, Mangan says. “There is only one expert. I’m the expert on me, you’re the expert on you, they’re the expert on themselves,” she says. “And nobody, I really mean nobody, can tell them how to do what they should be doing.” Even, it seems, if that means pursuing a “useless” degree – like one in liberal arts.

-- Amanda Ruggeri is a senior journalist and editor at BBC.com. You can follow her on Twitter at @amanda_ruggeri.

“There is a cult of ignorance in the United States, and there always has been. The strain of anti-intellectualism has been a constant thread winding its way through our political and cultural life, nurtured by the false notion that democracy means that 'my ignorance is just as good as your knowledge.'” – Isaac Asimov

In the early ’90s, a small group of “AIDS denialists,” including a University of California professor named Peter Duesberg, argued against virtually the entire medical establishment’s consensus that the human immunodeficiency virus (HIV) was the cause of Acquired Immune Deficiency Syndrome. Science thrives on such counterintuitive challenges, but there was no evidence for Duesberg’s beliefs, which turned out to be baseless. Once researchers found HIV, doctors and public health officials were able to save countless lives through measures aimed at preventing its transmission. The Duesberg business might have ended as just another quirky theory defeated by research. The history of science is littered with such dead ends. In this case, however, a discredited idea nonetheless managed to capture the attention of a national leader, with deadly results. Thabo Mbeki, then the president of South Africa, seized on the idea that AIDS was caused not by a virus but by other factors, such as malnourishment and poor health, and so he rejected offers of drugs and other forms of assistance to combat HIV infection in South Africa. By the mid-2000s, his government relented, but not before Mbeki’s fixation on AIDS denialism ended up costing, by the estimates of doctors at the Harvard School of Public Health, well over three hundred thousand lives and the births of some thirty-five thousand HIV-positive children whose infections could have been avoided. Mbeki, to this day, thinks he was on to something. Many Americans might scoff at this kind of ignorance, but they shouldn’t be too confident in their own abilities. In 2014, the Washington Post polled Americans about whether the United States should engage in military intervention in the wake of the 2014 Russian invasion of Ukraine. The United States and Russia are former Cold War adversaries, each armed with hundreds of long-range nuclear weapons. 
A military conflict in the center of Europe, right on the Russian border, carries a risk of igniting World War III, with potentially catastrophic consequences. And yet only one in six Americans—and fewer than one in four college graduates—could identify Ukraine on a map. Ukraine is the largest country entirely in Europe, but the median respondent was still off by about 1,800 miles. Map tests are easy to fail. Far more unsettling is that this lack of knowledge did not stop respondents from expressing fairly pointed views about the matter. Actually, this is an understatement: the public not only expressed strong views, but respondents actually showed enthusiasm for military intervention in Ukraine in direct proportion to their lack of knowledge about Ukraine. Put another way, people who thought Ukraine was located in Latin America or Australia were the most enthusiastic about the use of U.S. military force. These are dangerous times. Never have so many people had so much access to so much knowledge and yet have been so resistant to learning anything. In the United States and other developed nations, otherwise intelligent people denigrate intellectual achievement and reject the advice of experts. Not only do increasing numbers of lay people lack basic knowledge, they reject fundamental rules of evidence and refuse to learn how to make a logical argument. In doing so, they risk throwing away centuries of accumulated knowledge and undermining the practices and habits that allow us to develop new knowledge. This is more than a natural skepticism toward experts. I fear we are witnessing the death of the ideal of expertise itself, a Google-fueled, Wikipedia-based, blog-sodden collapse of any division between professionals and laypeople, students and teachers, knowers and wonderers—in other words, between those of any achievement in an area and those with none at all. 
Attacks on established knowledge and the subsequent rash of poor information in the general public are sometimes amusing. Sometimes they’re even hilarious. Late-night comedians have made a cottage industry of asking people questions that reveal their ignorance about their own strongly held ideas, their attachment to fads, and their unwillingness to admit their own cluelessness about current events. It’s mostly harmless when people emphatically say, for example, that they’re avoiding gluten and then have to admit that they have no idea what gluten is. And let’s face it: watching people confidently improvise opinions about ludicrous scenarios like whether “Margaret Thatcher’s absence at Coachella is beneficial in terms of North Korea’s decision to launch a nuclear weapon” never gets old. When life and death are involved, however, it’s a lot less funny. The antics of clownish anti-vaccine crusaders like actors Jim Carrey and Jenny McCarthy undeniably make for great television or for a fun afternoon of reading on Twitter. But when they and other uninformed celebrities and public figures seize on myths and misinformation about the dangers of vaccines, millions of people could once again be in serious danger from preventable afflictions like measles and whooping cough. The growth of this kind of stubborn ignorance in the midst of the Information Age cannot be explained away as merely the result of rank ignorance. Many of the people who campaign against established knowledge are otherwise adept and successful in their daily lives. In some ways, it is all worse than ignorance: it is unfounded arrogance, the outrage of an increasingly narcissistic culture that cannot endure even the slightest hint of inequality of any kind. By the “death of expertise,” I do not mean the death of actual expert abilities, the knowledge of specific things that sets some people apart from others in various areas. 
There will always be doctors and diplomats, lawyers and engineers, and many other specialists in various fields. On a day-to-day basis, the world cannot function without them. If we break a bone or get arrested, we call a doctor or a lawyer. When we travel, we take it for granted that the pilot knows how airplanes work. If we run into trouble overseas, we call a consular official who we assume will know what to do. This, however, is a reliance on experts as technicians. It is not a dialogue between experts and the larger community, but the use of established knowledge as an off-the-shelf convenience as needed and only so far as desired. Stitch this cut in my leg, but don’t lecture me about my diet. (More than two-thirds of Americans are overweight.) Help me beat this tax problem, but don’t remind me that I should have a will. (Roughly half of Americans with children haven’t bothered to write one.) Keep my country safe, but don’t confuse me with the costs and calculations of national security. (Most U.S. citizens do not have even a remote idea of how much the United States spends on its armed forces.) All of these choices, from a nutritious diet to national defense, require a conversation between citizens and experts. Increasingly, it seems, citizens don’t want to have that conversation. For their part, they’d rather believe they’ve gained enough information to make those decisions on their own, insofar as they care about making any of those decisions at all. On the other hand, many experts, and particularly those in the academy, have abandoned their duty to engage with the public. They have retreated into jargon and irrelevance, preferring to interact with each other only. Meanwhile, the people holding the middle ground to whom we often refer as “public intellectuals”—I’d like to think I’m one of them—are becoming as frustrated and polarized as the rest of society. The death of expertise is not just a rejection of existing knowledge. 
It is fundamentally a rejection of science and dispassionate rationality, which are the foundations of modern civilization. It is a sign, as the art critic Robert Hughes once described late 20th century America, of “a polity obsessed with therapies and filled with distrust of formal politics,” chronically “skeptical of authority” and “prey to superstition.” We have come full circle from a premodern age, in which folk wisdom filled unavoidable gaps in human knowledge, through a period of rapid development based heavily on specialization and expertise, and now to a postindustrial, information-oriented world where all citizens believe themselves to be experts on everything. Any assertion of expertise from an actual expert, meanwhile, produces an explosion of anger from certain quarters of the American public, who immediately complain that such claims are nothing more than fallacious “appeals to authority,” sure signs of dreadful “elitism,” and an obvious effort to use credentials to stifle the dialogue required by a “real” democracy. Americans now believe that having equal rights in a political system also means that each person’s opinion about anything must be accepted as equal to anyone else’s. This is the credo of a fair number of people despite being obvious nonsense. It is a flat assertion of actual equality that is always illogical, sometimes funny, and often dangerous. This book, then, is about expertise. The immediate response from most people when confronted with the death of expertise is to blame the Internet. Professionals, especially, tend to point to the Internet as the culprit when faced with clients and customers who think they know better. As we’ll see, that’s not entirely wrong, but it is also too simple an explanation. Attacks on established knowledge have a long pedigree, and the Internet is only the most recent tool in a recurring problem that in the past misused television, radio, the printing press, and other innovations the same way. 
So why all the fuss? What exactly has changed so dramatically for me to have written this book and for you to be reading it? Is this really the “death of expertise,” or is this nothing more than the usual complaints from intellectuals that no one listens to them despite their self-anointed status as the smartest people in the room? Maybe it’s nothing more than the anxiety about the masses that arises among professionals after each cycle of social or technological change. Or maybe it’s just a typical expression of the outraged vanity of overeducated, elitist professors like me. Indeed, maybe the death of expertise is a sign of progress. Educated professionals, after all, no longer have a stranglehold on knowledge. The secrets of life are no longer hidden in giant marble mausoleums, the great libraries of the world whose halls are intimidating even to the relatively few people who can visit them. Under such conditions in the past, there was less stress between experts and lay people, but only because citizens were simply unable to challenge experts in any substantive way. Moreover, there were few public venues in which to mount such challenges in the era before mass communications. Participation in political, intellectual, and scientific life until the early 20th century was far more circumscribed, with debates about science, philosophy, and public policy all conducted by a small circle of educated males with pen and ink. Those were not exactly the Good Old Days, and they weren’t that long ago. The time when most people didn’t finish high school, when very few went to college, and when only a tiny fraction of the population entered professions is still within living memory of many Americans. Social changes only in the past half century finally broke down old barriers of race, class, and sex not only between Americans in general but also between uneducated citizens and elite experts in particular. A wider circle of debate meant more knowledge but more social friction. 
Universal education, the greater empowerment of women and minorities, the growth of a middle class, and increased social mobility all threw a minority of experts and the majority of citizens into direct contact, after nearly two centuries in which they rarely had to interact with each other. And yet the result has not been a greater respect for knowledge, but the growth of an irrational conviction among Americans that everyone is as smart as everyone else. This is the opposite of education, which should aim to make people, no matter how smart or accomplished they are, learners for the rest of their lives. Rather, we now live in a society where the acquisition of even a little learning is the endpoint, rather than the beginning, of education. And this is a dangerous thing. Excerpt from The Death of Expertise. Oxford University Press, 2017. Tom Nichols is the author of The Death of Expertise: The Campaign Against Established Knowledge and Why It Matters. He is professor of National Security Affairs at the U.S. Naval War College, an adjunct professor at the Harvard Extension School, and a former aide in the U.S. Senate. He is also the author of several works on foreign policy and international security affairs, including The Sacred Cause, No Use: Nuclear Weapons and U.S. National Security, Eve of Destruction: The Coming Age of Preventive War, and The Russian Presidency. Nichols’s website is tomnichols.net and he can be found on Twitter at @RadioFreeTom. There are three things, once one’s basic needs are satisfied, that academic literature points to as the ingredients for happiness: having meaningful social relationships, being good at whatever it is one spends one’s days doing, and having the freedom to make life decisions independently.